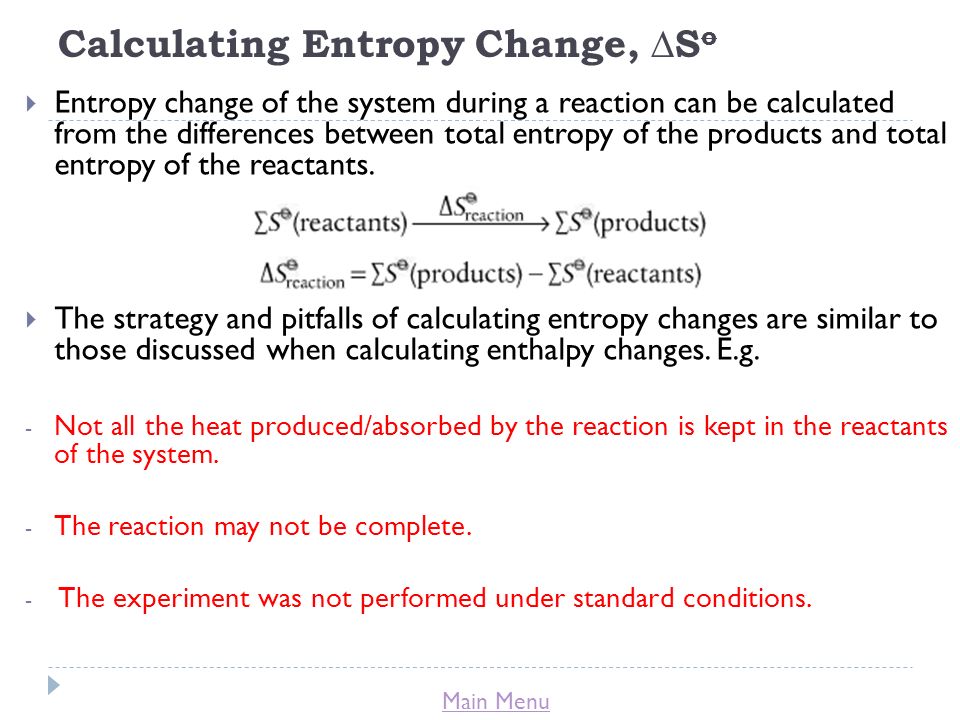

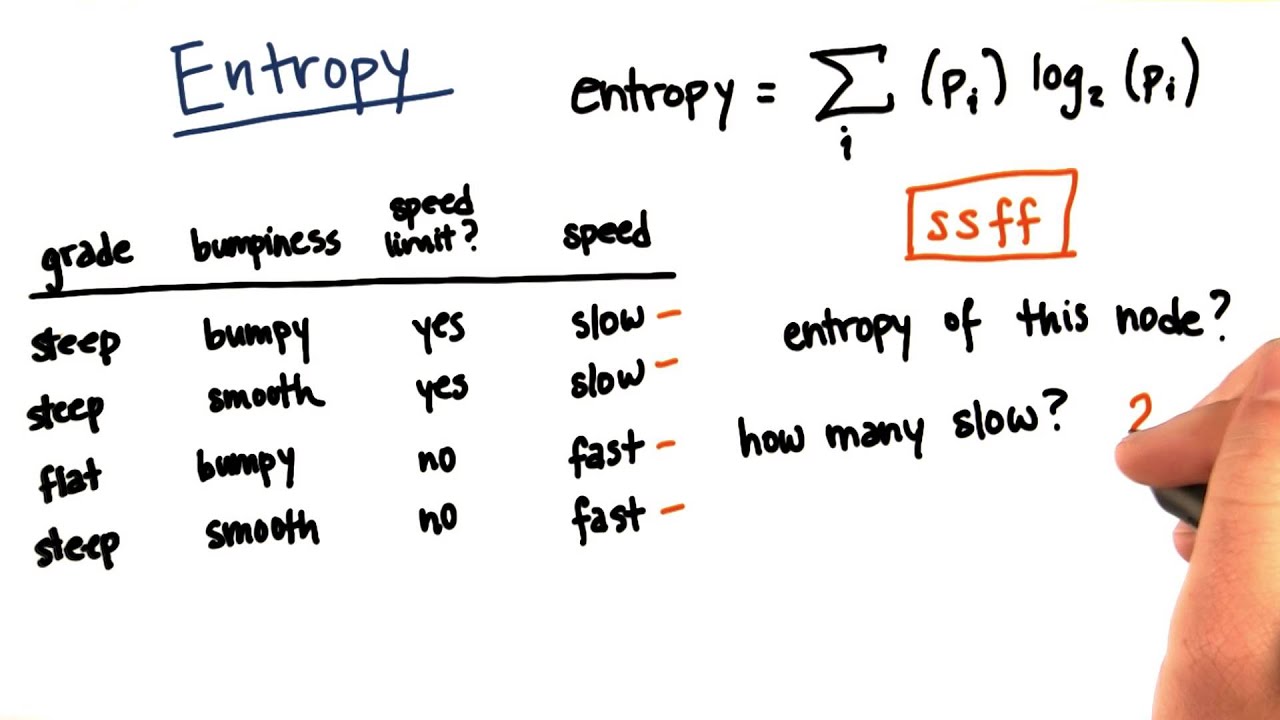

Mathematical methods exist to overcome the sampling bias that affects entropy and mutual information estimates, the so-called “sampling disaster”, but they require significant expertise and carry high time and computational costs. As such, there is a need for a simple, unbiased and computationally efficient tool for estimating the level of entropy and mutual information. In this article, we propose that the entropy-encoding compression algorithms widely used in text and image compression fulfill these requirements. By simply saving the signal in PNG picture format and measuring the size of the file on the hard drive, we can estimate entropy changes through different conditions. Furthermore, with some simple modifications of the PNG file, we can also estimate the evolution of mutual information between a stimulus and the observed responses through different conditions.
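The idea of using PNG file size as an entropy proxy can be illustrated with a minimal sketch. It relies only on the Python standard library and on the fact that PNG compresses pixel data with DEFLATE (LZ77 followed by Huffman coding). Everything else is an assumption made for illustration and not the authors' actual pipeline: the helper names `png_bytes`, `quantize` and `png_size` are hypothetical, the signal is quantized to 8-bit grayscale, rows are 100 pixels wide, no PNG scanline filtering is applied, and the size is measured on the encoded bytes in memory instead of on a file on disk. The resulting byte count is a relative measure for comparing conditions, not a calibrated entropy in bits.

```python
import math
import random
import struct
import zlib


def _chunk(tag: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: length, tag, data, CRC-32 over tag + data."""
    crc = zlib.crc32(tag + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + tag + data + struct.pack(">I", crc)


def png_bytes(rows):
    """Encode equal-length rows of 8-bit grayscale pixels as PNG bytes."""
    height, width = len(rows), len(rows[0])
    # IHDR: width, height, bit depth 8, color type 0 (grayscale),
    # compression 0 (DEFLATE), filter method 0, no interlacing.
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 0, 0, 0, 0)
    # Assumption: filter type 0 ("None") in front of every scanline.
    raw = b"".join(b"\x00" + row for row in rows)
    return (b"\x89PNG\r\n\x1a\n"
            + _chunk(b"IHDR", ihdr)
            + _chunk(b"IDAT", zlib.compress(raw, 9))
            + _chunk(b"IEND", b""))


def quantize(signal, levels=256):
    """Map a real-valued signal onto integer gray levels 0..levels-1."""
    lo, hi = min(signal), max(signal)
    span = (hi - lo) or 1.0  # avoid division by zero for a flat signal
    return bytes(min(levels - 1, int((x - lo) / span * levels)) for x in signal)


def png_size(signal, width=100):
    """Return the size in bytes of the signal stored as a grayscale PNG."""
    pixels = quantize(signal)
    usable = len(pixels) - len(pixels) % width  # drop an incomplete last row
    if usable == 0:
        raise ValueError("signal is shorter than one image row")
    rows = [pixels[i:i + width] for i in range(0, usable, width)]
    return len(png_bytes(rows))


# Usage: a regular signal should compress to a smaller file than noise.
random.seed(0)
regular = [math.sin(2 * math.pi * i / 50) for i in range(10_000)]
noisy = [random.random() for _ in range(10_000)]
print("regular:", png_size(regular), "bytes")
print("noisy:  ", png_size(noisy), "bytes")
```

Comparing the two sizes, instead of reading either one as an absolute value, mirrors the use described above: tracking entropy changes between conditions recorded and encoded in the same way.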

# Calculating entropy series

1 Lyon Neuroscience Research Center (CRNL), Inserm U1028, CNRS UMR 5292, Université Claude Bernard Lyon1, Bron, France

2 Laboratory of Synaptic Imaging, Department of Clinical and Experimental Epilepsy, UCL Queen Square Institute of Neurology, University College London, London, United Kingdom

Calculations of entropy of a signal or mutual information between two variables are valuable analytical tools in the field of neuroscience. They can be applied to all types of data, capture non-linear interactions and are model independent. Yet the limited size and number of recordings one can collect in a series of experiments make their calculation highly prone to sampling bias.